Sentinel-5P irradiance measurement animation

As part of the commissioning phase of the TROPOMI instrument on board ESA’s Sentinel-5P spacecraft, a dedicated radiometric measurement was put in place to estimate radiometric errors in Level 1B products based on S5P early in-flight calibration measurements.

Simulations of atmospheric transmission spectra showed that, near the O2 A band at 761 nm, the atmosphere is opaque at tangent altitudes below 10 to 15 km.

Measurements in this range, taken in a so-called solar occultation geometry, could potentially be used to detect residual out-of-band stray light effects.

In this post, we are going to show how to use Matplotlib to display an animated plot of the solar irradiance spectra detected during this dedicated solar calibration measurement as a function of time (which roughly translates into decreasing limb tangent heights), and observe the strong O2 A band absorption features occurring around 761 nm.

Loading the data

Let’s import our favourite libraries and load the data from our input product.

    import dateutil.parser

Our data are stored in a Sentinel-5P L1B product, a NetCDF4 file containing both measurements and instrument data.

Let’s open it and extract the variables we are interested in:

- irradiance, that is the measured spectral irradiance for each spectral pixel and each associated spectral channel, across all scanlines (which is our time dimension). Irradiance is a measurement of solar power and is defined as the rate at which solar energy falls onto a surface.
- calibrated_wavelength, a calibrated set of wavelengths derived from the comparison between the obtained irradiance measurement and a reference solar spectrum, which provides a per-pixel best estimate for the wavelength actually measured by each individual spectral channel.

    input_dir = '/Users/stefano/src/s5p/products'

The scanline relative timestamps can be derived by adding the delta_time values, expressed as milliseconds from the reference time, to a time_reference value.

We are going to make use of the dateutil module to parse the time_reference attribute, which is a UTC time specified as an ISO 8601 date, and then build an array of time stamps that can be indexed by our scanlines.

    time_reference = dateutil.parser.parse(l1b.time_reference)

Reference time: 2017-11-19 00:00:00+00:00
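
The timestamp arithmetic described above can be sketched as follows. This is a minimal illustration, not the notebook's code: the reference time is built with the standard-library datetime module rather than parsed with dateutil, and the delta_time offsets are invented values standing in for the ones stored in the product.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical stand-ins for the product's values: the reference time matches
# the one printed above, while the delta_time offsets (in ms) are invented.
time_reference = datetime(2017, 11, 19, tzinfo=timezone.utc)
delta_time = [36_000_000, 36_001_080, 36_002_160]  # one entry per scanline

# One timestamp per scanline: reference time plus that scanline's offset.
scanline_times = [time_reference + timedelta(milliseconds=ms) for ms in delta_time]

print("Reference time:", time_reference)
print("First scanline:", scanline_times[0].isoformat())
```

The resulting list can be indexed by scanline number, which is handy later for labelling animation frames.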

Making sense of the data

Let’s have a closer look at our irradiance variable:

    print(irradiance)

<class 'netCDF4._netCDF4.Variable'>

float32 irradiance(time, scanline, pixel, spectral_channel)

ancilary_vars: irradiance_noise irradiance_error quality_level spectral_channel_quality

_FillValue: 9.96921e+36

units: mol.m-2.nm-1.s-1

long_name: spectral photon irradiance

path = /BAND6_IRRADIANCE/SOLAR_IRRADIANCE_SPECIAL_MODE_0266/OBSERVATIONS

unlimited dimensions:

current shape = (1, 2772, 504, 520)

filling on

Which, speaking human, tells us that for each of the 2772 scanlines, there are 504 pixels and 520 associated spectral channels. Each element in this three-dimensional grid holds an irradiance measurement represented as a float value.

We want to “slice” the measurements around the area of interest: the O2 A band at 761 nm, for example in the 756 to 772 nm range.

Let’s consider a pixel – our reference pixel for the remainder of this post – in the centre of the swath, and its associated spectral channels, and let’s use NumPy’s where function to retrieve the spectral channel range enclosing the wavelengths we are interested in.

    PIXEL = 252

Spectral channel range: (254, 384)
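
As a rough sketch of that lookup, here is how NumPy’s where function can turn a wavelength window into a channel range. The wavelength grid below is synthetic (an evenly spaced guess, not the product’s calibrated values), so the indices it yields will differ from the range printed above.

```python
import numpy as np

# Synthetic stand-in for the calibrated wavelengths of one pixel (nm);
# the real values come from the calibrated_wavelength variable.
wavelengths = np.linspace(725.0, 786.0, 520)

WL_MIN, WL_MAX = 756.0, 772.0  # window around the O2 A band at 761 nm

# np.where returns a tuple of index arrays, one per dimension of the input.
channels = np.where((wavelengths >= WL_MIN) & (wavelengths <= WL_MAX))[0]
channel_min, channel_max = int(channels[0]), int(channels[-1])

print("Spectral channel range:", (channel_min, channel_max))
```

Taking the first and last matching index assumes the wavelengths vary monotonically along the spectral axis, which holds for this synthetic grid.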

Plotting the data

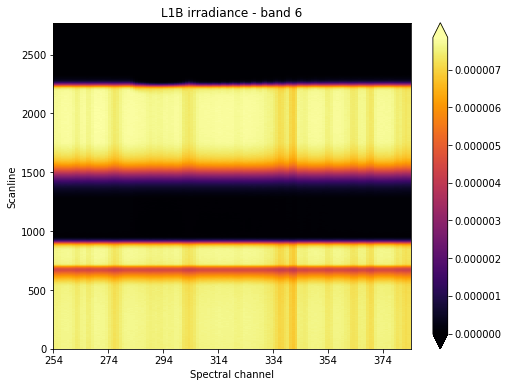

Now that we know which spectral channels we need to look at, let’s have a quick look at how the irradiance level evolves across all scanlines, that is with respect to the spacecraft’s position relative to the sun.

For that, we are going to make use of Matplotlib’s pcolormesh function.

    fig, ax = plt.subplots(figsize=(8,6))
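
A minimal sketch of such an overview plot, assuming the irradiance of the reference pixel has already been reduced to a two-dimensional array of shape (scanline, spectral_channel). The data below are synthetic: a flat spectrum with one absorption dip that fades out as the sun “sets”.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen backend; drop this line inside a notebook
import matplotlib.pyplot as plt
import numpy as np

# Synthetic irradiance for one pixel, shaped (scanline, spectral_channel).
n_scanlines, n_channels = 300, 130
channel = np.arange(n_channels)
spectrum = 1.0 - 0.8 * np.exp(-0.5 * ((channel - 40) / 6.0) ** 2)
fade = 1.0 / (1.0 + np.exp((np.arange(n_scanlines) - 200) / 15.0))
irradiance_2d = fade[:, None] * spectrum[None, :]

fig, ax = plt.subplots(figsize=(8, 6))
# Rows of the array map to the y axis (scanline), columns to the x axis.
mesh = ax.pcolormesh(irradiance_2d)
ax.set_xlabel("spectral channel")
ax.set_ylabel("scanline")
fig.colorbar(mesh, ax=ax, label="irradiance (arbitrary units)")
```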

We want to focus our attention on what’s happening around scanline 2250, which seems to be around the time when the sun “sets” from the point of view of our instrument’s line of sight.

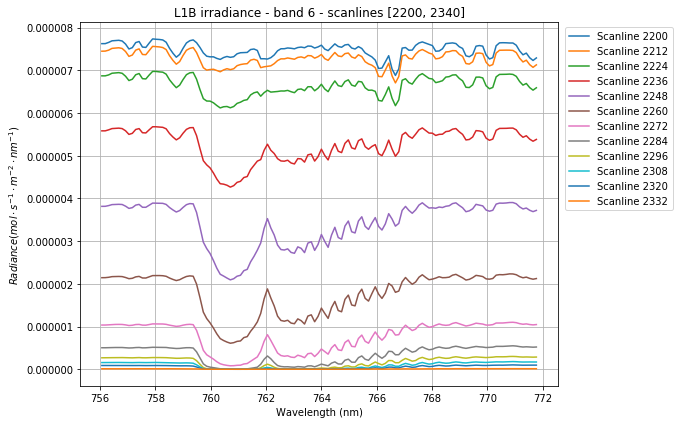

We could then slice our original irradiance along the scanline and spectral channel axes. At the same time, we could improve our signal-to-noise ratio by averaging measurements around our reference pixel. This will reduce our total number of dimensions by one.

    SCANLINE_MIN, SCANLINE_MAX = (2200, 2340)

We could also slice our calibrated_wavelength array around our reference pixel.

    calibrated_wavelength = calibrated_wavelength[0,PIXEL,:]
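
The slicing and averaging can be sketched as below. The arrays are small synthetic stand-ins that keep the axis order of the product, (time, scanline, pixel, spectral_channel); the sizes, the index values and the HALF_WIDTH parameter (how many neighbouring pixels to average on each side) are assumptions made for illustration.

```python
import numpy as np

# Small synthetic arrays with the same axis order as the product.
rng = np.random.default_rng(0)
irradiance = rng.random((1, 60, 21, 50), dtype=np.float32)
calibrated_wavelength = np.tile(np.linspace(725.0, 786.0, 50), (1, 21, 1))

PIXEL, HALF_WIDTH = 10, 2            # average pixels 8..12 (hypothetical)
SCANLINE_MIN, SCANLINE_MAX = 20, 40
CHANNEL_MIN, CHANNEL_MAX = 25, 38

# Drop the time axis, then cut along scanline, pixel and channel.
window = irradiance[0,
                    SCANLINE_MIN:SCANLINE_MAX,
                    PIXEL - HALF_WIDTH:PIXEL + HALF_WIDTH + 1,
                    CHANNEL_MIN:CHANNEL_MAX + 1]
irradiance_avg = window.mean(axis=1)  # collapse the pixel axis

# Wavelengths of the reference pixel for the same channel range.
wavelength = calibrated_wavelength[0, PIXEL, CHANNEL_MIN:CHANNEL_MAX + 1]

print(window.shape, "->", irradiance_avg.shape, wavelength.shape)
```

Averaging over the pixel axis is what removes one dimension, leaving an array indexed by scanline and spectral channel only.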

Let’s now plot our averaged irradiance for a subset of scanlines:

    fig, ax = plt.subplots(figsize=(8,6))
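
One possible way to draw such a plot is to overlay the spectra of a few evenly spaced scanlines. The sketch below uses synthetic data (a single Gaussian dip at 761 nm and a linear fade), and the choice of every 20th scanline is arbitrary.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen backend; drop this line inside a notebook
import matplotlib.pyplot as plt
import numpy as np

# Synthetic averaged irradiance, shaped (scanline, spectral_channel).
wavelength = np.linspace(756.0, 772.0, 130)
absorption = 1.0 - 0.8 * np.exp(-0.5 * ((wavelength - 761.0) / 1.0) ** 2)
fade = np.linspace(1.0, 0.0, 140)
irradiance_avg = fade[:, None] * absorption[None, :]

fig, ax = plt.subplots(figsize=(8, 6))
selected = range(0, 140, 20)  # every 20th scanline
for i in selected:
    ax.plot(wavelength, irradiance_avg[i], label=f"scanline {i}")
ax.set_xlabel("wavelength (nm)")
ax.set_ylabel("irradiance (arbitrary units)")
ax.legend()
```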

We can clearly notice how the irradiance gets squashed as the sun gradually drops out of sight. Also, the effect of the O2 absorption is distinctly visible around 761 nm.

How cool would it be to animate this sequence?

Animating the data

It turns out that we can easily set up animations in Matplotlib: we just need to set up our plot first, and then define a function specifying the frame to be plotted at the ith step in the animation.

    fig, ax = plt.subplots(figsize=(8,6))

Next, we are going to pass the animate function we just defined to FuncAnimation, which is Matplotlib’s class for making an animation by repeatedly calling a function. We need to specify the number of frames and the delay between frames in milliseconds. The blit parameter tells it to re-draw only the parts of the frame that have changed.

    anim = animation.FuncAnimation(fig, animate,
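
Putting the plot setup, the animate function and FuncAnimation together might look like the sketch below. It runs on synthetic data, and the frame count and the 50 ms interval are illustrative choices rather than the values used in the notebook. With blit=True, the animate function has to return the artists it modified.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen backend; drop this line inside a notebook
import matplotlib.pyplot as plt
import numpy as np
from matplotlib import animation

# Synthetic averaged irradiance, shaped (scanline, spectral_channel).
wavelength = np.linspace(756.0, 772.0, 130)
absorption = 1.0 - 0.8 * np.exp(-0.5 * ((wavelength - 761.0) / 1.0) ** 2)
fade = np.linspace(1.0, 0.0, 140)
irradiance_avg = fade[:, None] * absorption[None, :]

# Set up the static parts of the plot once, with an empty line to update.
fig, ax = plt.subplots(figsize=(8, 6))
ax.set_xlim(wavelength[0], wavelength[-1])
ax.set_ylim(0.0, 1.05 * irradiance_avg.max())
ax.set_xlabel("wavelength (nm)")
ax.set_ylabel("irradiance (arbitrary units)")
(line,) = ax.plot([], [])


def animate(i):
    """Draw the spectrum of scanline i and return the artists that changed."""
    line.set_data(wavelength, irradiance_avg[i])
    return (line,)


anim = animation.FuncAnimation(
    fig, animate, frames=irradiance_avg.shape[0], interval=50, blit=True
)
```

Fixing the axis limits up front keeps the view stable while the line shrinks from frame to frame.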

Next, we are going to make use of IPython’s inline display tools to display our animation within the Jupyter notebook.

    HTML(anim.to_html5_video())

We can now save our animation.

    anim.save(join(output_dir, 'irradiance_animation.mp4'))

How can we add a bit of life to our animation? What about animating a pcolormesh plot of the irradiance over a rolling window of a given number of scanlines? Now that would be cool!

We are going to start by setting a few parameters that define the scope of the animation, and by retrieving our original irradiance variable and re-slicing it to increase the scanline range.

    SCANLINES_PER_FRAME = 200

We defined a rectangle of 200 scanlines over our irradiance pcolormesh plot, and we are going to “roll” it over the plot until it plunges into total darkness.

Let’s set up our plot.

    fig, ax = plt.subplots(figsize=(8,6))

The magic behind the re-drawing of each frame lies in the set_array function, which replaces the data of the QuadMesh underlying the pcolormesh plot. Because of how pcolormesh creates the QuadMesh (see definition here), we need to subtract one from each dimension to make the dimensions fit together. This answer on Stack Overflow helped me a great deal in understanding why my plot looked funny in my first attempts.

Let’s bring the animation to life and display it in our notebook.

    anim = animation.FuncAnimation(
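
The rolling-window idea can be sketched as follows, again on synthetic data. The dimension mismatch arises because, with flat shading, a grid of (M+1, N+1) corner coordinates yields only M x N cells. This sketch sidesteps the problem by passing only the data to pcolormesh, so that Matplotlib builds the corner grid itself and every window has exactly as many values as the mesh has cells. It is a simplification under those assumptions, not the notebook’s approach.

```python
import matplotlib
matplotlib.use("Agg")  # off-screen backend; drop this line inside a notebook
import matplotlib.pyplot as plt
import numpy as np
from matplotlib import animation

# Synthetic irradiance, shaped (scanline, spectral_channel).
n_scanlines, n_channels = 600, 130
channel = np.arange(n_channels)
spectrum = 1.0 - 0.8 * np.exp(-0.5 * ((channel - 40) / 6.0) ** 2)
fade = 1.0 / (1.0 + np.exp((np.arange(n_scanlines) - 400) / 20.0))
irradiance_2d = fade[:, None] * spectrum[None, :]

SCANLINES_PER_FRAME = 200
N_FRAMES = n_scanlines - SCANLINES_PER_FRAME + 1  # last window ends at the last scanline

fig, ax = plt.subplots(figsize=(8, 6))
# Fixed colour limits keep the colour scale comparable across frames.
mesh = ax.pcolormesh(irradiance_2d[:SCANLINES_PER_FRAME],
                     vmin=0.0, vmax=irradiance_2d.max())
ax.set_xlabel("spectral channel")
ax.set_ylabel("scanline within window")


def animate(i):
    """Show the window of scanlines starting at scanline i."""
    window = irradiance_2d[i:i + SCANLINES_PER_FRAME]
    mesh.set_array(window.ravel())  # one value per mesh cell, flattened
    return (mesh,)


anim = animation.FuncAnimation(fig, animate, frames=N_FRAMES, interval=20, blit=True)
```

If explicit X and Y coordinates with the same shape as the data are passed instead, the data for each frame has to be trimmed by one row and one column, which is the subtraction mentioned above.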

We can now save the video and send it back home. Now that’s something that’s going to make our parents proud!

    anim.save(join(output_dir, 'irradiance_pcolormesh_animation.mp4'))

That’s it for now! I hope you enjoyed this post. Feel free to comment if you have questions or remarks.

The Jupyter notebook for this post is available on my GitHub repository.